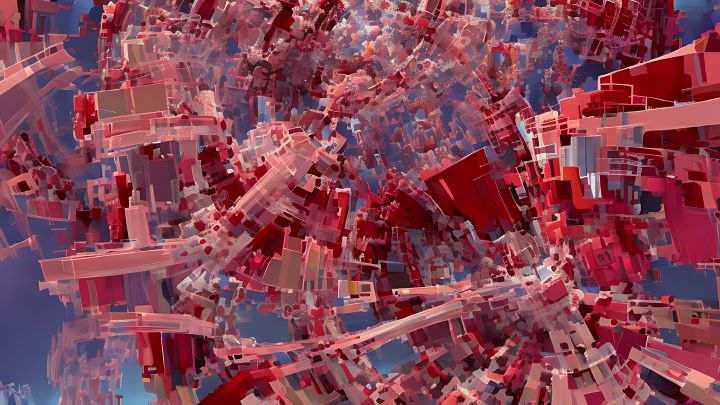

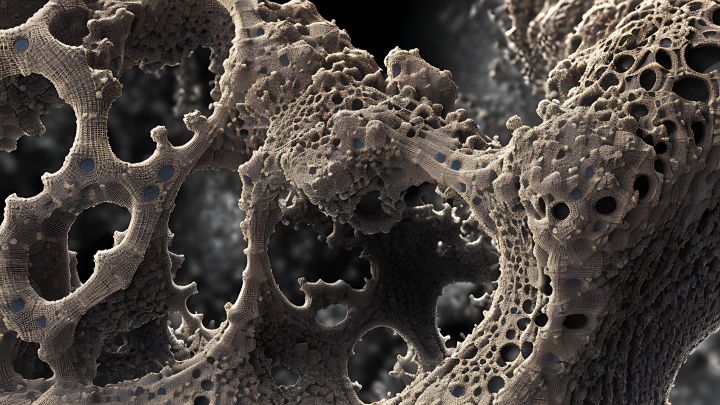

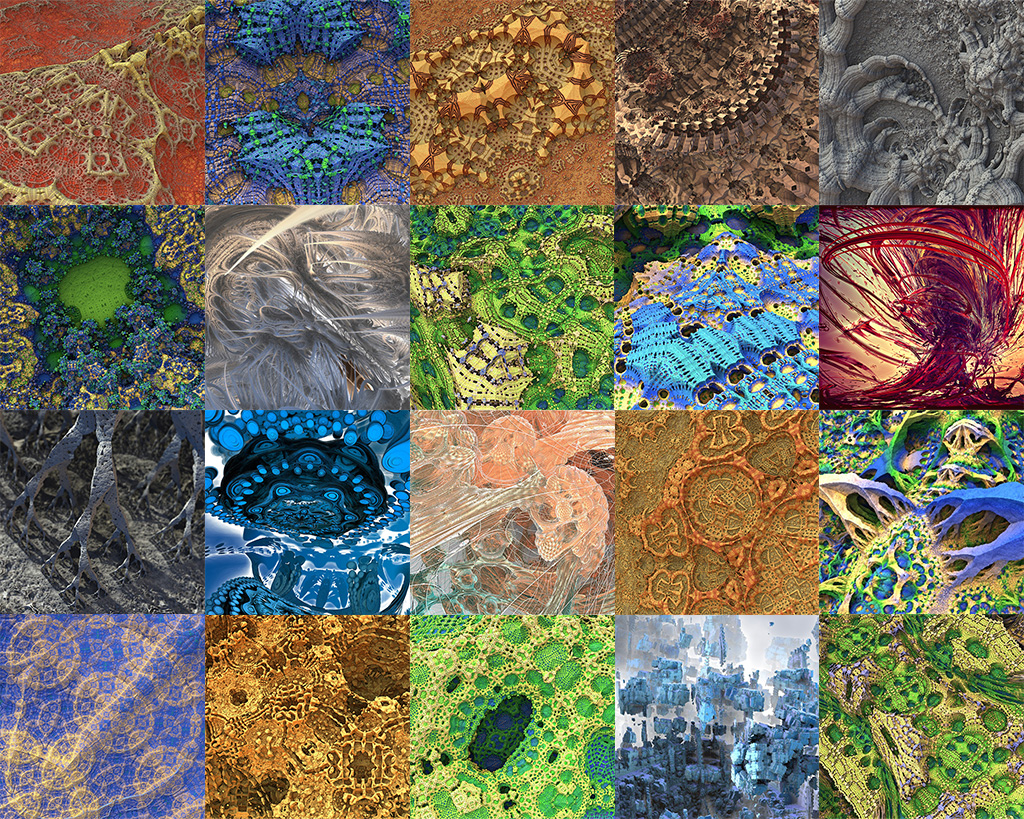

Over the course of a decade or more, I’ve gathered quite a few Mandelbulb 3D renders. I like using the ‘amazing surface’ formula a lot, but I was always a bit put off by the obvious symmetry and the repeating geometry.

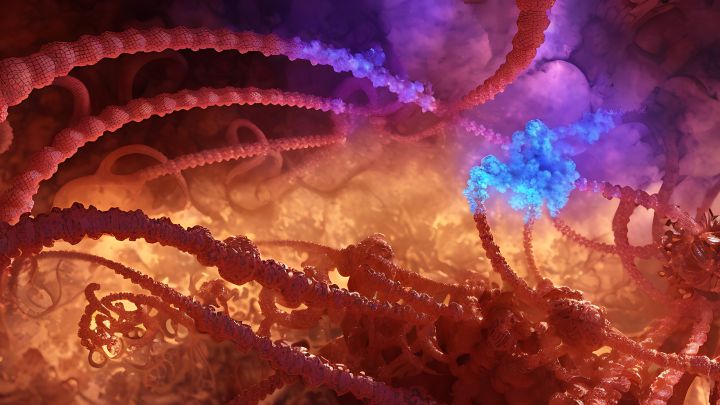

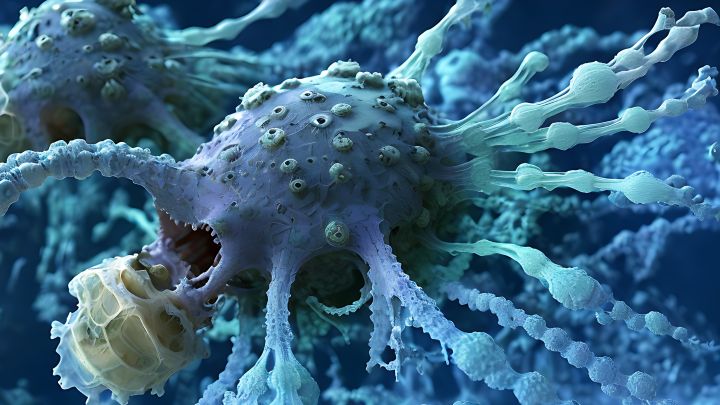

Nature is constructed with the same formulas, but many outside elements and factors play a role as well. If only those factors could be taken into account when rendering, the result would be truly astounding.

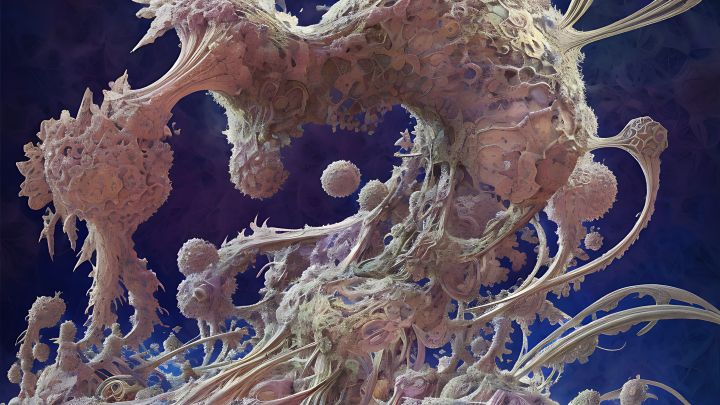

Fast-forward to today, and we have tools like DreamBooth that can train an AI model on a set of images, after which the model can generate new images from prompts.

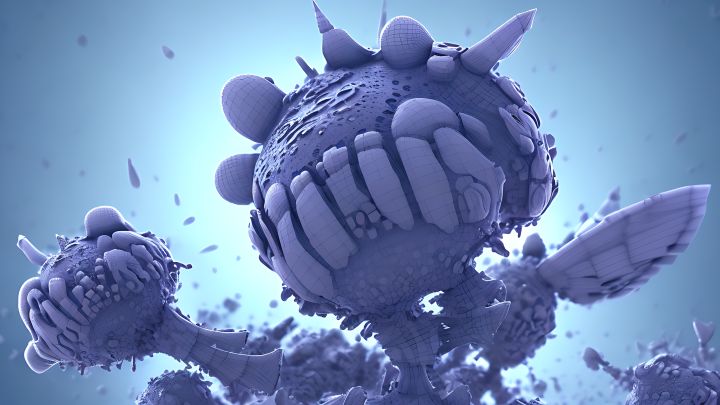

I put the procedure to the test on a selection of 20 fractal images, each 512 by 512 pixels. The resulting fractals are broken up in a more organic manner, and overall the images look more appealing and interesting.
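If you want to try this with your own renders, the images first need to be square and 512 by 512 pixels. Below is a minimal sketch of how that preparation could be done in Python with the Pillow library. It is not necessarily how I prepared my own set; the function names and the folder names in the usage note are made up for illustration.

```python
from pathlib import Path


def center_crop_box(width, height):
    """Return the (left, top, right, bottom) box of the largest centered square."""
    side = min(width, height)
    left = (width - side) // 2
    top = (height - side) // 2
    return (left, top, left + side, top + side)


def prepare_training_images(src_dir, dst_dir, size=512):
    """Center-crop every PNG/JPEG in src_dir to a square and save it at size x size.

    Hypothetical helper: the folder layout is an assumption, not part of any tool.
    """
    # Pillow is imported here so center_crop_box stays dependency-free.
    from PIL import Image

    dst = Path(dst_dir)
    dst.mkdir(parents=True, exist_ok=True)
    written = []
    for path in sorted(Path(src_dir).iterdir()):
        if path.suffix.lower() not in {".png", ".jpg", ".jpeg"}:
            continue  # skip anything that is not a render
        with Image.open(path) as img:
            square = img.convert("RGB").crop(center_crop_box(*img.size))
            square = square.resize((size, size), Image.LANCZOS)
            out = dst / f"{path.stem}.png"
            square.save(out)
            written.append(out)
    return written
```

Calling `prepare_training_images("renders", "training_set")` would write one 512 by 512 PNG per source image into the `training_set` folder. Cropping from the center is just one choice; for fractals with an off-center focal point, picking the crop by hand may give better training images.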

So it’s not just characters: fractals can also be used as training input for Stable Diffusion models, and I’m not quite done experimenting with them.

Enjoy!