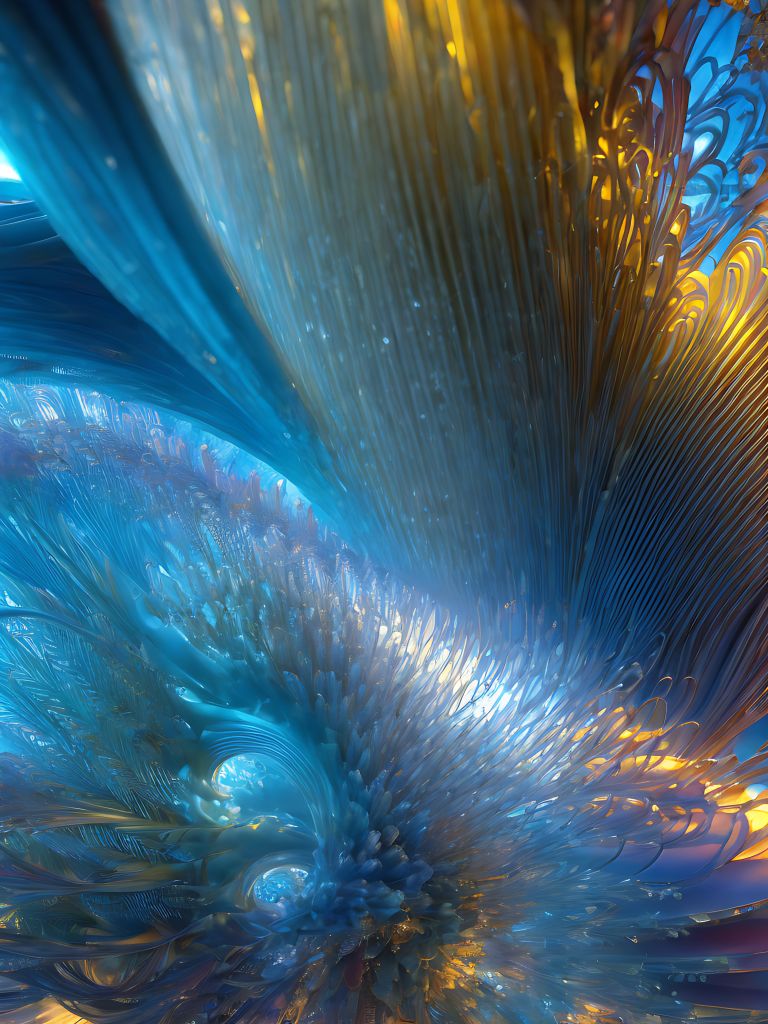

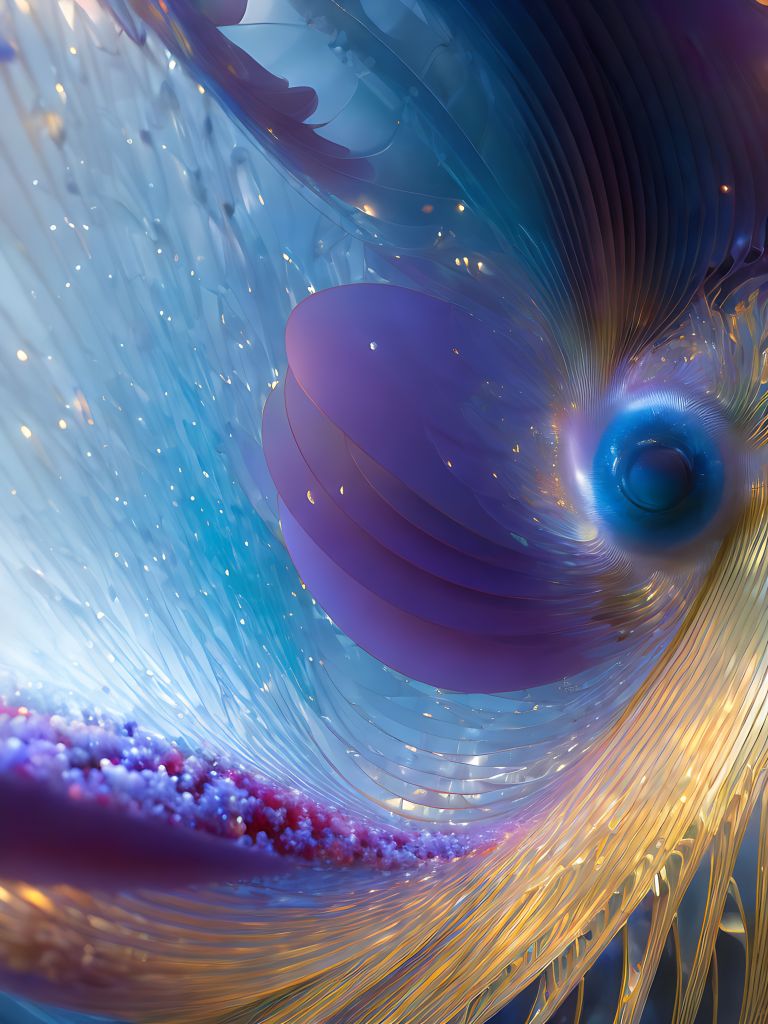

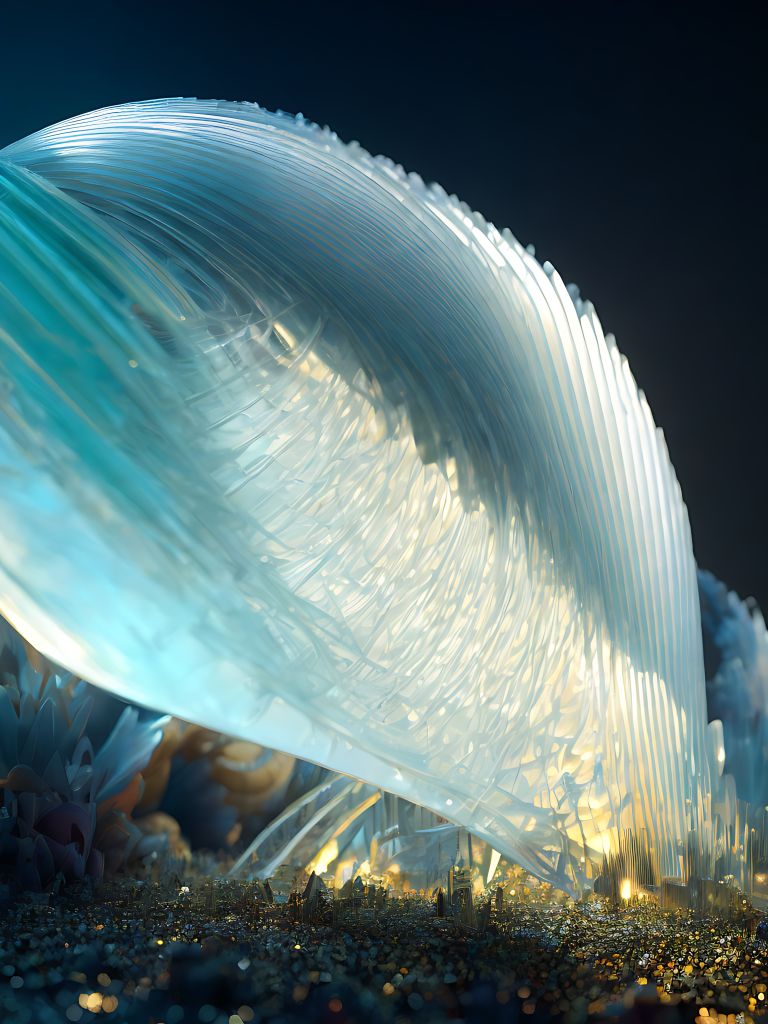

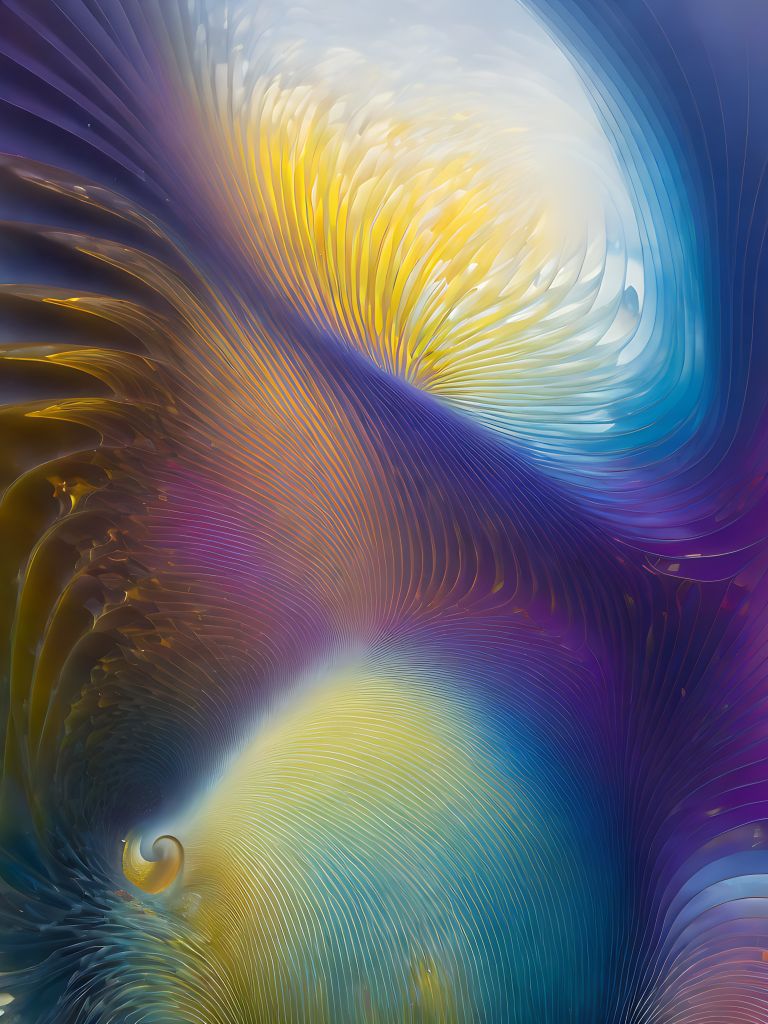

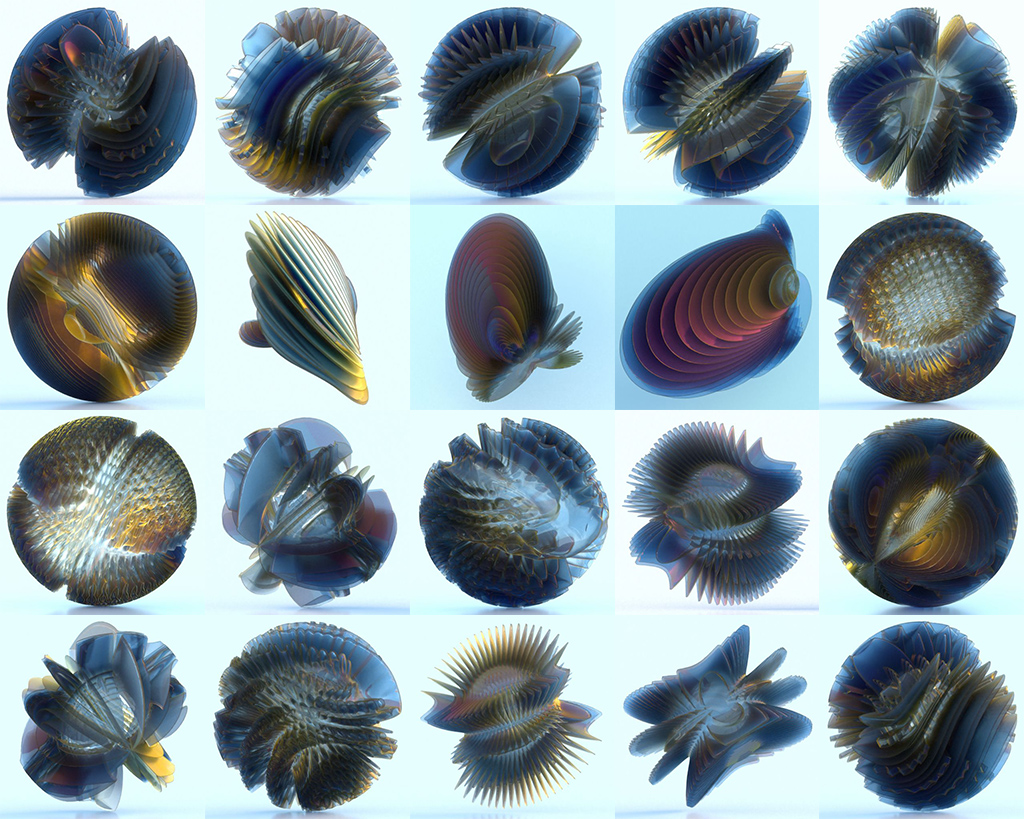

On the left are the renders I used to train a Stable Diffusion model using Dreambooth. The objects were created in Xenodream using Spherical Displacement. Once the model was trained, I wrote a few prompts that include the new keyword “xenodream” and let the AI generate new images.
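For anyone who wants to try the prompting step, here is a minimal sketch of how it could look with the Hugging Face `diffusers` library. This is not my exact setup: the prompt templates, the `generate` helper, the output folder and the model directory you pass in are all placeholders for illustration, and it assumes the Dreambooth run was saved in `diffusers` format and that a CUDA GPU is available.

```python
from pathlib import Path

KEYWORD = "xenodream"  # the new keyword the model was trained on

# Hypothetical prompt templates, for illustration only.
TEMPLATES = [
    "a {kw} sculpture in a museum, studio lighting",
    "a cathedral interior in the style of {kw}",
    "a portrait of a woman wearing a {kw} headdress",
]


def build_prompts(keyword=KEYWORD, templates=TEMPLATES):
    """Insert the trained keyword into each prompt template."""
    return [t.format(kw=keyword) for t in templates]


def generate(model_dir, prompts, out_dir="out"):
    """Render one image per prompt with a fine-tuned model (assumed paths)."""
    # Imported here so build_prompts() works without the heavy dependencies.
    import torch
    from diffusers import StableDiffusionPipeline

    pipe = StableDiffusionPipeline.from_pretrained(
        model_dir, torch_dtype=torch.float16
    ).to("cuda")
    Path(out_dir).mkdir(exist_ok=True)
    for i, prompt in enumerate(prompts):
        image = pipe(prompt, num_inference_steps=50, guidance_scale=7.5).images[0]
        image.save(Path(out_dir) / f"{KEYWORD}_{i:02d}.png")


if __name__ == "__main__":
    # Only prints the prompts; call generate() with your own model folder.
    for p in build_prompts():
        print(p)
```

The point is simply that the keyword goes into the prompt like any other word, so it can be combined with whatever style or subject you like.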

The results below show that the model can freely apply what it learned from the new input images to a variety of styles and subjects. On some occasions we even get to see the objects from the inside, although the training data did not include any interior images.

Google’s Dreambooth is known to work well with portraits and faces, but now I can safely say it works with abstract content too.

Enjoy the views!

Mark